Summary:

Learn how to seamlessly stream OpenAI responses in NextJS using Vercel’s AI SDK. With a simple integration process outlined in this guide, developers can efficiently harness the power of real-time AI generation, enhancing user experiences and workflow efficiency.

Introduction

In today’s rapidly evolving digital landscape, integrating advanced AI capabilities has become imperative for companies striving to stay competitive. For an OpenAI development company, using a modern framework like NextJS to streamline how OpenAI responses are generated and managed offers many advantages.

From enhancing user experience to optimizing workflow efficiency, the expertise of an OpenAI development company can profoundly impact the seamless integration and utilization of OpenAI’s capabilities within the NextJS framework. In this guide, we delve into the strategies and insights essential for OpenAI development companies to effectively harness the potential of NextJS in streamlining OpenAI responses, thus empowering businesses to unlock new realms of innovation and efficiency.

You may have been searching, with some frustration, for a reliable way to stream OpenAI responses in NextJS. There are many ways to stream an OpenAI response, and there is also a lot of work to do once the stream arrives: the raw data has to be parsed to make it readable.
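To make the parsing work concrete: without a helper library, OpenAI's streaming endpoint sends server-sent events, with one `data: {...}` JSON payload per line and a final `data: [DONE]` marker. The sketch below shows roughly what hand-written parsing looks like. The names `ChatChunk` and `extractDeltas` are hypothetical, and the function assumes every chunk contains only whole lines, which real network chunks do not guarantee, so treat it as an illustration rather than a production parser.

```typescript
// Hypothetical shape of one streamed chat-completion payload
// (only the fields this sketch reads).
type ChatChunk = { choices: { delta: { content?: string } }[] };

// Hypothetical helper: pulls the text deltas out of one chunk of the raw
// event stream. Assumes the chunk holds complete "data: ..." lines.
function extractDeltas(rawChunk: string): string {
  let text = "";
  for (const line of rawChunk.split("\n")) {
    const trimmed = line.trim();
    // Only "data:" lines carry payloads; blank lines separate events.
    if (!trimmed.startsWith("data:")) continue;
    const payload = trimmed.slice("data:".length).trim();
    // The stream ends with a literal [DONE] marker instead of JSON.
    if (payload === "[DONE]") break;
    const parsed = JSON.parse(payload) as ChatChunk;
    text += parsed.choices[0]?.delta?.content ?? "";
  }
  return text;
}
```

A real implementation would also need to buffer partial lines between chunks and handle malformed JSON, which is the kind of work the helper library used in this guide is meant to take off your hands.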

Note: We will use NextJS and Vercel AI SDK throughout the blog.

What is Next.js?

Next.js is a React framework for building full-stack web applications. You use React Components to build user interfaces, and Next.js for additional features and optimizations.

Under the hood, Next.js also abstracts and automatically configures tooling needed for React, like bundling, compiling, and more. This allows you to focus on building your application instead of spending time with configuration.

Whether you’re an individual developer or part of a larger team, Next.js can help you build interactive, dynamic, and fast React applications. If you’re looking to bring on experienced talent, you can hire a Next JS developer from Toptal to ensure your project is in expert hands, giving you peace of mind and saving valuable time.

What is Vercel?

Vercel is a platform for developers that provides the tools, workflows, and infrastructure you need to build and deploy your web apps faster, without the need for additional configuration.

Vercel supports popular frontend frameworks out-of-the-box, and its scalable, secure infrastructure is globally distributed to serve content from data centers near your users for optimal speeds.

During development, Vercel provides tools for real-time collaboration on your projects such as automatic preview and production environments, and comments on preview deployments.

Vercel’s platform is made by the creators of Next.js and is designed for Next.js applications. The two complement each other closely: by leveraging the strengths of both, developers can build and deploy web applications more easily and deliver web experiences that stand out in today’s competitive landscape.

How can we make streaming easier using Vercel and NextJS?

In June 2023, Vercel released a new library, ai (the Vercel AI SDK), which provides built-in LLM adapters, streaming-first UI helpers, and stream helpers and callbacks. With this library, streaming OpenAI responses has become much easier.

Before diving into an example, here are the libraries and frameworks that the Vercel AI SDK supports.

It supports React/Next.js, Svelte/SvelteKit, with support for Nuxt/Vue coming soon.

Example: front end and back end both in NextJS (a React framework)

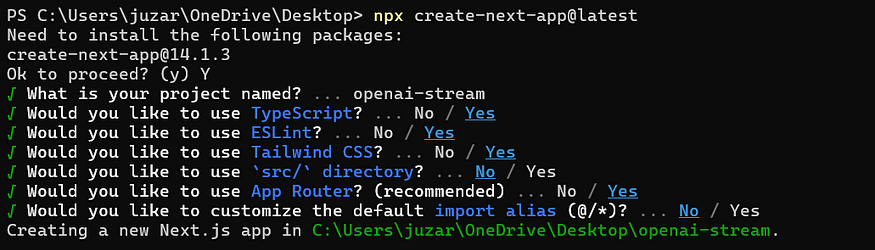

Step 1: Create a NextJS app and install the Vercel AI SDK

npx create-next-app@latest

To install the SDK, enter the following command in your terminal:

npm i aiTo install the Openai, enter the following command in your terminal:

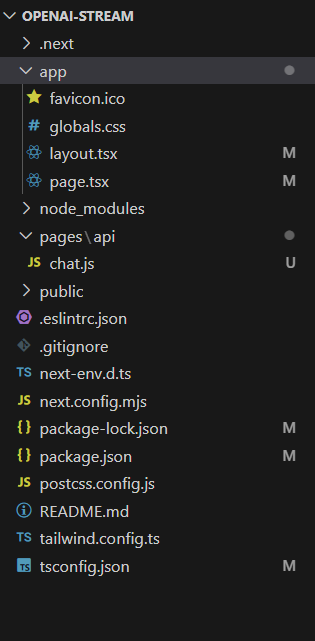

npm i openaiI have just followed the simple/default folder structure of what npx create-next-app@latest created for me for making this demo simple and easy to understand.
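The back-end route in Step 3 reads the OpenAI key from an environment variable, and Next.js loads such variables from a .env.local file in the project root. As a small sketch of how a route could fail early with a clear message when the key is missing, consider the helper below. The name `requireEnv` is hypothetical, and the variable name in the example call is an assumption; use whichever name your route actually reads. Note that variables prefixed with NEXT_PUBLIC_ are exposed to the browser, so a secret key is safer without that prefix.

```typescript
// Hypothetical helper: returns a required environment variable, or throws
// a clear error instead of passing an undefined key to the API client.
function requireEnv(
  env: Record<string, string | undefined>,
  name: string
): string {
  const value = env[name];
  if (value === undefined || value.trim() === "") {
    throw new Error(`Missing environment variable: ${name}`);
  }
  return value;
}

// Example call inside a server-side route (the variable name is an assumption):
// const apiKey = requireEnv(process.env, "OPENAI_API_KEY");
```

Passing the environment object in as a parameter keeps the helper easy to exercise with a plain object in tests.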

Step 2: Create a front-end page to stream the response

"use client"

import { useState } from "react";

export default function Home() {
  const [question, setQuestion] = useState("");
  const [answer, setAnswer] = useState("");

  // Calls the OpenAI route and processes the streaming response
  async function retrieveResponse() {
    // Call the route, sending the question as a JSON body
    const response = await fetch("/api/chat", {
      method: "POST",
      headers: { "Content-Type": "application/json" },
      body: JSON.stringify({ question }),
    });

    // Stop early if the request failed or there is no body to read
    if (!response.ok || !response.body) {
      setAnswer("Request failed.");
      return;
    }

    let resptext = "";
    // Decode the byte stream into text as it arrives
    const reader = response.body
      .pipeThrough(new TextDecoderStream())
      .getReader();

    // Process the stream chunk by chunk
    while (true) {
      const { value, done } = await reader.read();
      if (done) {
        break;
      }
      resptext += value;
      // Update the UI with everything received so far
      setAnswer(resptext);
    }
  }

  return (
    <div>
      <label htmlFor="question">Ask your question</label>
      <input id="question" type="text" value={question} onChange={(e) => setQuestion(e.target.value)} />
      <button onClick={retrieveResponse}>Stream Response</button>
      <br />
      <p>{answer}</p>
    </div>
  );
}

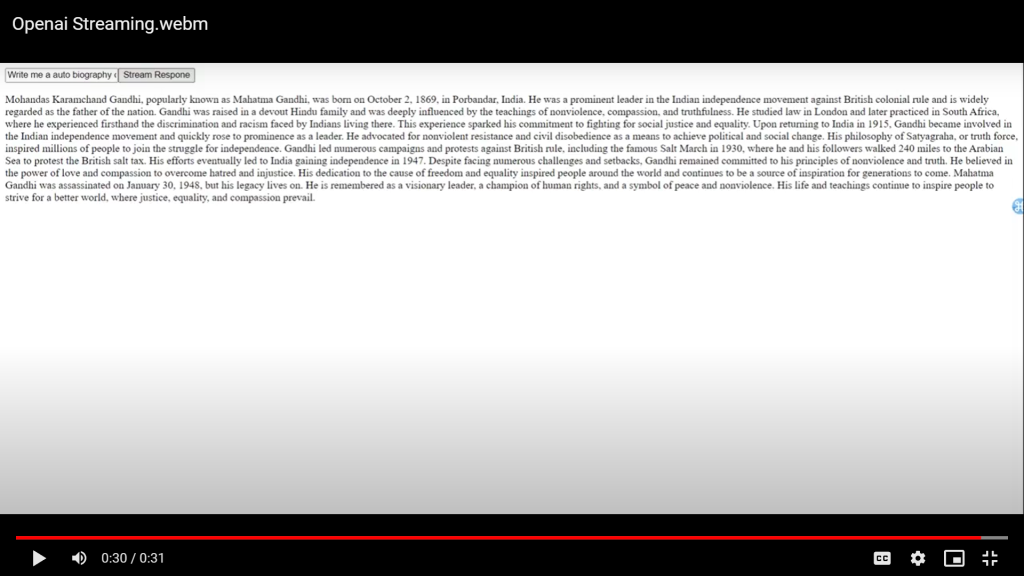

The above component creates an interface where users can enter a question and click a button to send it to a server-side API (/api/chat). The streaming response from the server is then displayed in real time.
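The reading loop in the component can also be pulled out into a small reusable function. The following is a minimal sketch, not part of the SDK: `readTextStream` and its `onUpdate` callback are hypothetical names, and it uses a plain TextDecoder in streaming mode instead of TextDecoderStream so that characters split across chunk boundaries are still decoded correctly.

```typescript
// Hypothetical helper: reads a byte stream to the end and reports the
// accumulated text after every chunk (for example, to a React state setter).
async function readTextStream(
  body: ReadableStream<Uint8Array>,
  onUpdate: (textSoFar: string) => void
): Promise<string> {
  const reader = body.getReader();
  const decoder = new TextDecoder();
  let text = "";
  while (true) {
    const { value, done } = await reader.read();
    if (done) break;
    // stream: true keeps multi-byte characters intact across chunk boundaries.
    text += decoder.decode(value, { stream: true });
    onUpdate(text);
  }
  // Flush any bytes the decoder is still holding.
  text += decoder.decode();
  return text;
}
```

Inside the component, a call such as `await readTextStream(response.body, setAnswer)` would take the place of the while loop, assuming `response.body` has already been checked for null.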

Step 3: Create a back-end route to stream the response

import { OpenAIStream, StreamingTextResponse } from "ai";
import OpenAI from "openai";

// Set the runtime to edge for best performance
export const config = {
  runtime: "edge",
};

export default async function handler(request: Request) {
  try {
    // Read the question sent by the front-end page
    const { question } = await request.json();

    // Create the OpenAI client. The key is read from a server-only variable;
    // a NEXT_PUBLIC_ prefix would expose it to the browser bundle.
    const openai = new OpenAI({
      apiKey: process.env.OPENAI_API_KEY,
    });

    // Request a streamed chat completion from the OpenAI API
    const responseFromOpenAI = await openai.chat.completions.create({
      model: "gpt-3.5-turbo",
      temperature: 0,
      top_p: 1,
      messages: [{ role: "user", content: question }],
      stream: true,
    });

    // Convert the response into a friendly text-stream
    const stream = OpenAIStream(responseFromOpenAI);

    // Respond with the stream
    return new StreamingTextResponse(stream);
  } catch (error) {
    // Edge handlers have no Node-style response object, so return a Response
    const message = error instanceof Error ? error.message : "Unknown error";
    return new Response(message, { status: 400 });
  }
}

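The above code defines a serverless function that receives a question from an HTTP request, sends it to the OpenAI API for processing, and streams the response back to the client. The default-export handler and the config export follow the Pages Router convention, so the file would live at a path such as pages/api/chat.ts; in an App Router project, the equivalent would be a POST export in app/api/chat/route.ts.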
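To give a feel for what "streaming back the response" means at the HTTP level, here is a minimal sketch that uses only standard web APIs. It is not the SDK's actual implementation: `fakeTokens` is a hypothetical stand-in for a model's token stream, and `toTextStreamResponse` is a hypothetical helper that wraps any async sequence of strings in a streaming Response.

```typescript
// Hypothetical stand-in for a model's token stream.
async function* fakeTokens(): AsyncGenerator<string> {
  yield "Streaming ";
  yield "works";
}

// Hypothetical helper: wraps an async iterable of strings in a streaming
// HTTP response whose body is sent piece by piece.
function toTextStreamResponse(tokens: AsyncIterable<string>): Response {
  const encoder = new TextEncoder();
  const iterator = tokens[Symbol.asyncIterator]();
  const stream = new ReadableStream<Uint8Array>({
    // pull runs each time the consumer is ready for more data.
    async pull(controller) {
      const { value, done } = await iterator.next();
      if (done) {
        controller.close();
      } else {
        controller.enqueue(encoder.encode(value));
      }
    },
  });
  return new Response(stream, {
    headers: { "Content-Type": "text/plain; charset=utf-8" },
  });
}
```

Because the body is a stream, the client can start reading the first piece before the last one has been produced, which is what lets the front-end page update the answer as it arrives.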

Step 4: Run the app

npm run dev

Output:

References:

https://vercel.com/blog/introducing-the-vercel-ai-sdk

https://platform.openai.com/docs/introduction

To stay informed about the latest developments in the field of AI, keeping an eye on Vercel’s AI documentation is advisable. Be sure to stay tuned for updates and enhancements as Vercel continues to advance its offerings in the AI domain.
